Data Cleansing

Data Governance & Privacy

Challenge

Poor data quality is one of the biggest obstacles to the success of many data integration projects. A study by the Gartner Group stated that the majority of currently planned data warehouse projects will suffer limited acceptance or fail outright. Gartner declared that the main cause of project problems was a lack of attention to data quality.

Moreover, once in the system, poor data quality can cost organizations vast sums in lost revenues. Defective data leads to breakdowns in the supply chain, poor business decisions, and inferior customer relationship management. It is essential that data quality issues are tackled during any large-scale data project to enable project success and future organizational success.

Therefore, the challenge is twofold: to cleanse project data so that the project succeeds, and to ensure that all data entering the organizational data stores provides for consistent and reliable decision-making.

Description

A significant portion of time in the project development process should be dedicated to data quality, including the implementation of data cleansing processes. In a production environment, data quality reports should be generated after each data warehouse implementation or when new source systems are integrated into the environment. There should also be a provision for rolling back if data quality testing indicates that the data is unacceptable.
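The rollback provision described above can be illustrated with a small quality gate. This is a minimal sketch in plain Python using the standard `sqlite3` module, not an Informatica feature: the `customer` table, the `load_with_quality_gate` function, and the 5% threshold for missing emails are all assumptions chosen for illustration.

```python
import sqlite3

def load_with_quality_gate(conn, rows, max_null_rate=0.05):
    """Insert rows, then commit only if the share of missing emails is acceptable.

    The table layout and threshold are hypothetical; a real project would use
    the metrics agreed with the business.
    """
    cur = conn.cursor()
    try:
        # The INSERT implicitly opens a transaction that can still be rolled back.
        cur.executemany("INSERT INTO customer (name, email) VALUES (?, ?)", rows)
        total, missing = cur.execute(
            "SELECT COUNT(*), SUM(email IS NULL OR TRIM(email) = '') FROM customer"
        ).fetchone()
        null_rate = (missing or 0) / total if total else 0.0
        if null_rate > max_null_rate:
            conn.rollback()  # data quality is unacceptable: undo the load
            return False, null_rate
        conn.commit()
        return True, null_rate
    except Exception:
        conn.rollback()
        raise

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (name TEXT, email TEXT)")
conn.commit()

bad_batch = [("Ann", "ann@example.com"), ("Bob", None)]
accepted, rate = load_with_quality_gate(conn, bad_batch)
print(accepted, rate)
```

In this sketch the quality test runs inside the same transaction as the load, so a rejected batch leaves the target table exactly as it was before the load started.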

Informatica Data Quality delivers pervasive data quality to all projects, applications and stakeholders across the organization, providing high-quality, trusted data for use in making critical business decisions. The Informatica platform provides column and rule-based profiling, scorecard creation and management, management of duplicate and bad records, and creation and management of reference data that is shared across all data quality processes. The Informatica platform can be used to profile data, standardize and parse data, standardize address data, identify and consolidate duplicate records, and share data quality rules across projects to provide accurate and consistent data at an enterprise level.

Concepts

Following are some key concepts in the field of data quality. These data quality concepts provide a foundation that helps to develop a clear picture of the subject data, which can improve both efficiency and effectiveness. The list of concepts can be read as a process, leading from profiling and analysis to consolidation.

Profiling and Analysis - Profiling is primarily concerned with metadata discovery and definition and the analysis of underlying data.

Parsing - the process of extracting individual elements within records, files or data entry forms to check the structure and content of each field and to create discrete fields devoted to specific information types. Examples include: name, title, company name, phone number and SSN.

Cleansing and Standardization - refers to arranging information in a consistent manner or preferred format. Examples include the removal of dashes from phone numbers or SSNs.

Enhancement - refers to adding useful (but optional) information to existing data, or to completing data. Examples include: sales volume, number of employees for a given business and zip+4 codes.

Validation - the process of correcting data using algorithmic components and secondary reference data sources to check and validate information. Example: validating addresses with postal directories.

Matching and De-duplication - refers to removing or flagging for removal redundant or poor-quality records where high-quality records of the same information exist. Use matching components and business rules to identify records that may refer, for example, to the same customer.

Consolidation - using the data sets defined during the matching process to combine all cleansed or approved data into a single consolidated view. Examples are building best record, master record or house-holding.
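Several of the concepts above can be sketched end to end in a few lines of plain Python. This is an illustrative sketch only, not how Informatica Data Quality implements them: the function names, the "name, phone" input layout, the 0.8 similarity threshold, and the "longest value wins" consolidation rule are all assumptions. Enhancement and validation are left out because they depend on external reference data such as postal directories.

```python
import re
from difflib import SequenceMatcher

def parse(raw):
    """Parsing: split a free-text 'name, phone' entry into discrete fields."""
    name, _, phone = raw.partition(",")
    return {"name": name.strip(), "phone": phone.strip()}

def standardize(rec):
    """Cleansing and standardization: consistent casing, digits-only phone."""
    return {
        "name": " ".join(rec["name"].split()).title(),
        "phone": re.sub(r"\D", "", rec["phone"]),  # drops dashes, spaces, brackets
    }

def is_match(a, b, threshold=0.8):
    """Matching: same phone and similar names (a hypothetical business rule)."""
    similarity = SequenceMatcher(None, a["name"], b["name"]).ratio()
    return a["phone"] == b["phone"] and similarity >= threshold

def consolidate(group):
    """Consolidation: build a best record from the longest value of each field."""
    return {field: max((r[field] for r in group), key=len) for field in group[0]}

def cleanse(raw_entries):
    records = [standardize(parse(raw)) for raw in raw_entries]
    groups = []
    for rec in records:
        for group in groups:
            if is_match(group[0], rec):
                group.append(rec)  # duplicate of an existing group
                break
        else:
            groups.append([rec])   # first record of a new group
    return [consolidate(g) for g in groups]

entries = [
    "jon  smith, 555-123-4567",
    "John Smith, (555) 123 4567",
    "Mary Jones, 555-987-6543",
]
result = cleanse(entries)
print(result)
```

The two Smith entries only become comparable after standardization, which is why the concepts read naturally as a process: matching on raw values would treat `555-123-4567` and `(555) 123 4567` as different phone numbers.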

Using Informatica Data Quality in Data Projects

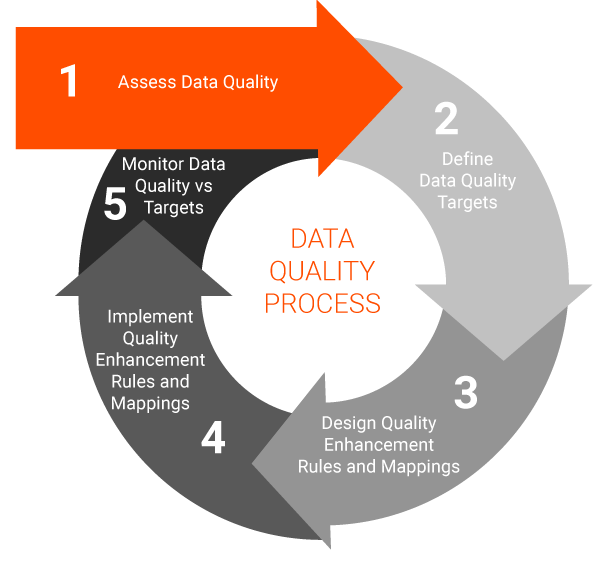

The following figure illustrates how data quality processes can function in a project setting:

In stage 1, work with the business or project sponsor to define metrics and analyze the quality of the project data according to agreed measures. This stage is performed in the Informatica platform, which enables the creation of versatile and easy-to-use scorecards to communicate data quality metrics to all interested parties.

In stage 2, verify the target levels of data quality for the business using the data quality measurements taken in stage 1. This must be done in accordance with project resources and scheduling.

In stage 3, use the Informatica platform to design the data quality rules, mappings, and projects to achieve the targets. Capturing business rules and testing the mapplets and mappings are also covered in this stage.

In stage 4, deploy the data quality rules and mappings. Stage 4 is the phase in which data cleansing and other data quality tasks are performed on the project data.

In stage 5, test and measure the results of the data quality rules and compare them to the initial data quality assessment to verify that targets have been met. If targets have not been met, this information feeds into another iteration of data quality operations in which the rules and mappings are tuned and optimized.
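The measure, target, and re-measure loop in stages 1, 2, and 5 can be sketched as a simple scorecard comparison. This is a plain-Python illustration under stated assumptions, not the Informatica scorecard feature: completeness is used as the only metric, and the field names, sample rows, and target values are hypothetical.

```python
def completeness(rows, field):
    """Share of rows with a non-empty value in `field`."""
    if not rows:
        return 0.0
    filled = sum(1 for row in rows if str(row.get(field) or "").strip())
    return filled / len(rows)

def scorecard(rows, targets):
    """Compare each measured metric with its agreed target."""
    report = {}
    for field, target in targets.items():
        score = completeness(rows, field)
        report[field] = {"score": score, "target": target, "met": score >= target}
    return report

# Stage 2: target levels agreed with the business (hypothetical values).
targets = {"email": 0.9, "phone": 0.75}

# Stage 1: initial assessment of the project data.
baseline = [
    {"email": "ann@example.com", "phone": "5551234567"},
    {"email": "", "phone": "5559876543"},
    {"email": None, "phone": "5550001111"},
    {"email": "dee@example.com", "phone": ""},
]

# Stage 5: the same data after the cleansing rules have run.
cleansed = [
    {"email": "ann@example.com", "phone": "5551234567"},
    {"email": "bob@example.com", "phone": "5559876543"},
    {"email": "cy@example.com", "phone": "5550001111"},
    {"email": "dee@example.com", "phone": ""},
]

before = scorecard(baseline, targets)
after = scorecard(cleansed, targets)

# Any metric still below target feeds into another iteration.
needs_iteration = [field for field, result in after.items() if not result["met"]]
print(before, after, needs_iteration)
```

Keeping the baseline scorecard alongside the post-cleansing one makes the comparison in stage 5 explicit and shows which metrics, if any, still need another iteration.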

In a large data project, data quality processes of varying sizes and impact may be necessary at many points in the project plan.