Scoping the Initial Data Archive Project Using Informatica Supplied Accelerators

Cloud Data Warehouse & Data Lake

Challenge

Informatica Data Archive comes with Accelerators for Siebel, PeopleSoft, Deltek Costpoint, and Oracle E-Business Suite. Each of these application product families contains metadata for many versions; each version covers a large number of applications, and each application can contain many entities. It can be challenging to determine which entities should be run for a particular product family version while keeping the scope of the initial data archive project manageable. If every entity within a product family version were run, the project would have very little chance of success. Many of the entities would most likely not relocate any data, and for many of those that did, the volume would be too low to justify the effort. The initial scope of the data archive project should focus on a small handful of carefully chosen entities that relocate the most data with the least effort.

Description

DGA (Data Growth Analysis)

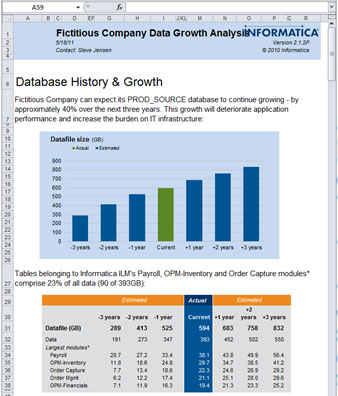

As part of the decision process for purchasing Informatica Data Archive, an Informatica Sales Engineer provides a data growth analysis script to run against a source database. The script generates an output file that is returned to the Sales Engineer, who processes it and loads it into a spreadsheet, shown in the example below.

In addition to an overview of how the source database is projected to grow over the next three years, the spreadsheet breaks the data down by application/module, which makes it easy to determine which applications hold the largest volumes of data. It also lists the 25 largest tables in the system, which is helpful when an application contains many entities. When an application has multiple entities, some will often relocate data while others will not, because the functionality behind those entities is not being used within the application. The entities chosen for the initial scope should cover all or most of the tables on the largest tables list. The Informatica Professional Services Consultant assigned to the implementation can provide the list of entities that correspond to those tables. The 25 largest tables section also identifies any large custom tables that may need to be added to an existing accelerator.

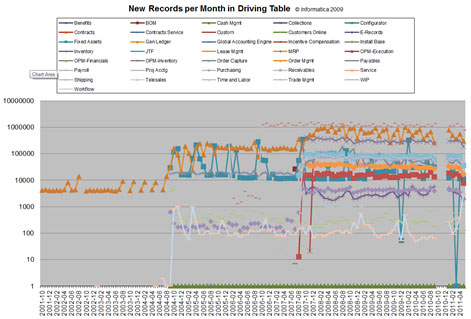
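The matching of entities to the largest tables can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the table names, sizes, entity names, and the entity-to-table mapping are all hypothetical placeholders, not accelerator metadata. In practice the sizes would come from the data growth analysis spreadsheet and the mapping from the Professional Services Consultant.

```python
# Hypothetical "largest tables" list: table name -> size in GB (illustrative values).
largest_tables = {
    "TABLE_A": 120.0,
    "TABLE_B": 80.0,
    "TABLE_C": 45.0,
    "XX_CUSTOM_D": 30.0,  # stands in for a large custom table
}

# Hypothetical mapping of accelerator entities to the tables they relocate.
entity_tables = {
    "ENTITY_1": ["TABLE_A", "TABLE_B"],
    "ENTITY_2": ["TABLE_C"],
    "ENTITY_3": ["TABLE_SMALL"],  # touches nothing on the largest tables list
}


def rank_entities(tables, mapping):
    """Return (entity, covered_gb) pairs, largest covered volume first."""
    ranked = []
    for entity, names in mapping.items():
        covered = sum(tables.get(name, 0.0) for name in names)
        ranked.append((entity, covered))
    ranked.sort(key=lambda pair: pair[1], reverse=True)
    return ranked


def unmapped_tables(tables, mapping):
    """Return large tables that no entity covers (candidate custom tables)."""
    mapped = {name for names in mapping.values() for name in names}
    return [name for name in tables if name not in mapped]


for entity, covered_gb in rank_entities(largest_tables, entity_tables):
    print(f"{entity}: {covered_gb:.0f} GB of the largest tables")

print("Not covered by any entity:", unmapped_tables(largest_tables, entity_tables))
```

Entities at the top of the ranking are the natural starting point for the initial scope, while any table reported as not covered is a candidate custom table to review.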

Custom tables are often left until a later phase of the data archive project, but if a table is very large and relates directly to an existing accelerator that is being run, it may make sense to include it in the initial phase of the project.

The data growth analysis spreadsheet also includes a growth chart that graphs the data volume for each application over time, as shown below.

This chart can be used to identify applications whose data does not go back far enough in time to be included in the initial project. A breakdown by application alone does not provide enough information to determine whether an application, and some or all of its entities, should be included. An application may hold a large volume of data, but if its retention policy is longer than the period over which it has been adding large volumes of data to the system, it is not a good candidate for the initial project.
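The retention check described above amounts to a simple comparison, sketched here in Python. The application names, history lengths, and retention periods are hypothetical; real values would be read from the growth chart and from the organization's retention policies.

```python
def archivable_years(years_of_history, retention_years):
    """Years of data that fall outside the retention period (never negative)."""
    return max(0.0, years_of_history - retention_years)


def is_candidate(years_of_history, retention_years):
    """An application qualifies only if some data is older than its retention period."""
    return archivable_years(years_of_history, retention_years) > 0


# Hypothetical applications: name -> (years of significant data, retention in years).
applications = {
    "APP_A": (8, 7),  # one year of data beyond retention
    "APP_B": (3, 7),  # large volume, but nothing old enough to archive yet
}

candidates = [
    name
    for name, (history, retention) in applications.items()
    if is_candidate(history, retention)
]
print("Candidates for the initial project:", candidates)
```

In this sketch `APP_B` is excluded regardless of how much data it holds, because none of that data is yet older than its retention period.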

A final consideration when choosing the entities for the initial archive project is entity order, or precedence. There is an Accelerator Reference document for each of the supported canned applications, and if a particular entity needs to be run before another entity, this is noted in that document. If the entity with the largest amount of data going back the longest in time has a prerequisite entity, the prerequisite also needs to be included in the initial project. This is one case where an entity may be included in the initial scope even though running it will not relocate a significant amount of data. If the prerequisite were left out, the number of exceptions raised by the large entity would be high, which would defeat the purpose of including that entity in the initial scope.

The complexity of the entities should also be considered when determining the scope of the initial project. The more complex the entities, the fewer of them should be included (and vice versa). A good rule of thumb is to limit the initial project to between 2 and 5 applications or between 5 and 10 entities.
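The precedence rule can be illustrated with a short Python sketch that expands a chosen list of entities with their prerequisites and then checks the result against the upper end of the rule of thumb. The entity names and the prerequisite mapping are hypothetical; the real precedence information comes from the Accelerator Reference document. The sketch also assumes that prerequisites can chain (a prerequisite may have its own prerequisite) and that the mapping contains no cycles.

```python
MAX_ENTITIES = 10  # upper end of the 5 to 10 entity rule of thumb


def with_prerequisites(selected, prerequisites):
    """Return the selected entities plus their prerequisites, in run order.

    Each prerequisite appears before the entity that depends on it.
    """
    ordered = []
    seen = set()

    def visit(entity):
        if entity in seen:
            return
        seen.add(entity)
        for required in prerequisites.get(entity, []):
            visit(required)
        ordered.append(entity)

    for entity in selected:
        visit(entity)
    return ordered


def within_entity_limit(entities, limit=MAX_ENTITIES):
    """Check the expanded scope against the entity limit."""
    return len(entities) <= limit


# Hypothetical precedence: ENTITY_BIG requires ENTITY_PRE to be run first.
prerequisites = {"ENTITY_BIG": ["ENTITY_PRE"]}
selected = ["ENTITY_BIG", "ENTITY_2"]

scope = with_prerequisites(selected, prerequisites)
print("Run order:", scope)
print("Within entity limit:", within_entity_limit(scope))
```

Expanding the scope before counting matters because prerequisites add to the number of entities in the project even when they relocate little data themselves.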